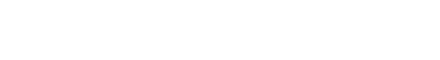

By now you’ve probably seen the headline: LinkedIn is the #2 most cited domain across ChatGPT Search, Perplexity, and Google AI Mode. Marketers are scrambling to “optimize for AI visibility,” vendors are selling new tools weekly, and your Slack channels are full of screenshots.

Here’s what the conversation is mostly missing: the difference between earning a citation and gaming one — and why that difference will determine whether your LinkedIn AI strategy compounds or collapses.

This article is the tactical follow-up to our pillar piece on LinkedIn and AI Search in 2026. If you haven’t read that yet, start there. What follows assumes you understand why visibility alone isn’t the goal. Here we’re going deep on how — specifically the three mechanics most LinkedIn AI guides never mention.

The Problem With Most LinkedIn AI Advice

Most of what’s being written right now about LinkedIn and AI search tells you some version of the same thing: post more, post consistently, write long-form articles, use educational content, build your follower count.

That advice isn’t wrong. The Semrush study of 89,000 cited LinkedIn URLs confirms that frequent posters, original content, and educational framing all correlate with AI citations.

But here’s the gap: that advice treats LinkedIn as a closed loop. Post on LinkedIn → get cited in AI → done.

The reality of how AI citation actually works is far more distributed than that. And if you only optimize inside LinkedIn’s walls, you’re leaving the majority of your citation potential untouched.

There are three moves that separate teams who are building durable AI visibility from teams who are just posting more:

- Earn the citation — don’t manufacture it

- Build the distribution flywheel beyond LinkedIn

- Track the branded prompts your buyers are actually typing

Let’s go through each.

Move 1: Earn the Citation — Don’t Manufacture It

There’s a specific type of content flooding LinkedIn right now. You’ve seen it. The listicle dressed up as insight. The “10 things AI taught me about leadership” post. The agency blog that publishes 50 variations of “we are thought leaders” without ever demonstrating thought leadership. Auto-generated content published at volume, optimized for semantic signals, written for algorithms rather than people.

This content can generate citations. In the short term, it often does. And that’s exactly what makes it dangerous.

Wil Reynolds at Seer Interactive puts it bluntly: AI is summarizing the internet, and beliefs live in people’s heads. When AI cites your content, it pulls forward the language, framing, and conclusions in that content with roughly 0.60 semantic fidelity — meaning AI responses closely mirror what your LinkedIn content actually says. If what your LinkedIn content says is generic, optimized filler, that’s what AI will amplify about you.

You aren’t just optimizing for a ranking. You’re training AI’s opinion of your brand.

What Actually Gets Cited (And Why)

The Semrush data is instructive here. The most-cited LinkedIn content shares a consistent profile:

- Original, not reshared. About 95% of cited posts are original content. Reshares account for just 5% of citations. AI rewards people who add something to the conversation, not people who pass it along.

- Educational, not promotional. Over half of all cited content is knowledge or advice-driven. Content that explains how something works, shares a specific result, or documents a real process outperforms content that announces things.

- Moderate engagement, high relevance. The median cited post has 15–25 reactions. The posts going viral are not the posts getting cited. AI retrieval is not a popularity contest — it rewards relevance to the query.

The example Semrush highlights is telling: one of the top-cited LinkedIn articles in their dataset is a piece where an author draws on firsthand experience to rank the best SEO newsletters and explain each recommendation. It wasn’t a viral post. It wasn’t produced at scale. It was specific, useful, and authoritative — and AI keeps surfacing it because it keeps being the right answer.

The Practical Test Before You Publish

Before you publish any piece of LinkedIn content, ask: would I send this to a client in a DM as a resource? Wil Reynolds frames this perfectly: look through your sent DMs with links. How many of them look like auto-generated listicles? Almost none, because your reputation is on the line when you make a recommendation. Hold your content to that standard.

If the answer is no, rework it or don’t publish it. Speed-optimized content that doesn’t clear that bar is quietly eroding the brand equity your AI visibility depends on.

Move 2: Build the Distribution Flywheel Beyond LinkedIn

This is the single biggest gap in most LinkedIn AI visibility strategies, and the research makes the opportunity impossible to ignore.

The Citation Lift Study

Stacker partnered with AI visibility platform Scrunch on a study analyzing eight articles across five LLMs and 944 prompt-platform combinations. They measured citation rates for the same stories published only on brand domains versus those same stories distributed across trusted third-party news publishers.

The results:

| Condition | Citation Rate |
|---|---|
| Brand domain only | 7.6% |
| With earned distribution | 34% |
| Citation lift | 325% |

That’s not a marginal improvement. That’s a structural one.

The mechanism is straightforward. When your content lives only on LinkedIn or your company blog, an AI model has one opportunity to encounter it. If your domain doesn’t carry strong topical authority for the query, that single touchpoint may not register.

When the same story appears across multiple trusted publisher domains — earned placements, syndicated articles, industry newsletters, contributed pieces — the model encounters that information pattern in multiple contexts. That repetition across authoritative sources is what signals to AI that this content is worth citing.

Syndicated-only citations are particularly instructive: in the Stacker study, 19.2% of citations came exclusively from third-party versions of the content, and the brand’s own domain received no citation credit at all. For nearly one in five citations, earned distribution produced visibility that the brand site could not have generated alone.

What the Distribution Flywheel Looks Like in Practice

The implication is that your LinkedIn content strategy and your PR strategy need to be unified. Here’s how to build that flywheel:

Step 1: Identify your highest-value original content.

Not your most-viewed posts. Your most authoritative ones. Original research, proprietary data, firsthand case studies, documented results. These are the pieces worth distributing because they carry something third-party publishers can actually use.

Step 2: Pitch it as a contributed piece before you post it on LinkedIn.

If you post your original research on LinkedIn first and then try to pitch it to a publication, most editors will pass because it’s no longer exclusive. Flip the sequence. Pitch the insight as a contributed piece or data story, get it placed, then amplify the placement on LinkedIn. Your LinkedIn post links to the authoritative third-party version, which itself links back to your site — both signals compound.

Step 3: Syndicate strategically with canonical tags.

For content that’s already published on your domain, explore syndication partnerships with industry newsletters and publishers who will re-publish with a canonical tag pointing back to your original URL. Traditional search engines generally treat canonical tags as a strong hint about which URL is the original rather than as a binding directive, so confirm that each partner actually implements the tag. Since SEO domain authority continues to influence how AI systems assess credibility, clean canonicalization protects your original content while your distributed versions expand citation surface area.
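One way to spot-check a syndication partner is to confirm that the republished page declares a canonical link back to your original URL. The sketch below is a minimal illustration using only Python's standard library; the function name `canonical_points_to` and the example URLs are hypothetical, and it assumes you have already fetched the partner page's HTML by whatever means you prefer.

```python
from html.parser import HTMLParser
from typing import Optional


class CanonicalFinder(HTMLParser):
    """Records the href of the first <link rel="canonical"> tag it sees."""

    def __init__(self) -> None:
        super().__init__()
        self.canonical: Optional[str] = None

    def handle_starttag(self, tag, attrs):
        if tag != "link" or self.canonical is not None:
            return
        attr_map = {name: (value or "") for name, value in attrs}
        # rel may hold several space-separated tokens, so split before matching
        if "canonical" in attr_map.get("rel", "").lower().split():
            self.canonical = attr_map.get("href") or None


def canonical_points_to(html: str, original_url: str) -> bool:
    """True if the page's canonical link matches original_url.

    Trailing slashes are ignored; any other difference counts as a mismatch.
    """
    finder = CanonicalFinder()
    finder.feed(html)
    if finder.canonical is None:
        return False
    return finder.canonical.rstrip("/") == original_url.rstrip("/")


# Hypothetical syndicated copy of an article hosted at example.com
syndicated_html = (
    '<html><head>'
    '<link rel="canonical" href="https://example.com/research/">'
    '</head><body>Republished story</body></html>'
)
print(canonical_points_to(syndicated_html, "https://example.com/research"))
```

A `False` result only tells you the tag is missing or points elsewhere. It does not tell you how any search engine or AI system will treat the page.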

Step 4: Measure citation lift, not just traffic.

The KPI most teams track from earned media is referral traffic. That will always look modest compared to paid or organic. The metric to add alongside it: citation rate in AI responses for your target prompts, measured before and after a distribution push. That’s where the compounding shows up.
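To make the before-and-after comparison concrete, here is a rough sketch of how citation rate and relative lift could be computed from a log of prompt runs. The `PromptRun` structure, the function names, and the sample data are all assumptions made for illustration; they are not taken from the Stacker/Scrunch study or from any specific tracking tool.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class PromptRun:
    """One prompt sent to one AI platform, with a manual or tool-derived result."""
    prompt: str
    platform: str
    brand_cited: bool


def citation_rate(runs: List[PromptRun]) -> float:
    """Share of runs in which the brand was cited (0.0 for an empty log)."""
    if not runs:
        return 0.0
    return sum(run.brand_cited for run in runs) / len(runs)


def citation_lift(before: List[PromptRun], after: List[PromptRun]) -> Optional[float]:
    """Relative change in citation rate; None when the baseline rate is zero."""
    baseline = citation_rate(before)
    if baseline == 0:
        return None
    return (citation_rate(after) - baseline) / baseline


# Hypothetical logs: the same 8 prompt-platform combinations, run twice
before = [PromptRun(f"prompt {i}", "ChatGPT", i < 2) for i in range(8)]  # 2 of 8 cited
after = [PromptRun(f"prompt {i}", "ChatGPT", i < 6) for i in range(8)]   # 6 of 8 cited

print(citation_rate(before), citation_rate(after), citation_lift(before, after))
# rates are 0.25 and 0.75, so the lift is 2.0 (a 200% increase)
```

Keeping the prompt set and platforms identical across both runs matters more than the arithmetic: if the prompts change between measurements, the lift figure stops meaning anything.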

The PR-as-GEO Frame

This is a mindset shift worth making explicitly: PR is now a GEO tactic.

Getting your brand mentioned in a respected industry publication used to matter for brand awareness and the occasional backlink. Now it matters because AI systems draw heavily from established news outlets and trusted publisher domains when assembling answers. A placement in an industry publication that AI already treats as authoritative is a citation signal for your brand, not just a traffic signal.

This changes the ROI calculation on PR completely. A placement that sends 200 referral visitors is no longer a modest win. That same placement may be contributing to citation lift across thousands of AI-prompted conversations you’ll never directly observe.

Move 3: Track the Branded Prompts Your Buyers Are Actually Typing

Here’s the prompt that should change how you think about all of this:

“I’m choosing between two PR firms. I’m a tech company focused on GEO. My friends recommended Maven PR and AgileCat. Help me compare them.”

Go look at your AI visibility tracking tool right now. Do you have any prompts that look like that? Most teams don’t — because they’re building their prompt tracking strategy around unbranded category queries, while their actual buyers are entering the decision phase with a brand already in mind, using AI to validate the choice.

Seer Interactive’s UX research found that up to 44% of AI prompts included brand names. Gartner data shows that 77% of B2B purchases start with a network recommendation. The math tells you what’s actually happening: by the time your buyer is prompting AI about your brand, someone they trust has already mentioned you. They’re not discovering you. They’re investigating you.

That’s the prompt that matters more than any category query — and it’s the prompt most teams are completely blind to.

The Branded Prompt Audit

Run this exercise across ChatGPT, Perplexity, and Google AI Mode:

Discovery prompts (for awareness)

- “[Your category] for [your target audience]”

- “Best [your service] companies”

- “How to [solve the problem you solve]”

Comparison prompts (where decisions happen)

- “[Your brand] vs. [Competitor A] vs. [Competitor B]”

- “My colleague recommended [Your brand], what do I need to know?”

- “Is [Your brand] good for [specific use case]?”

Validation prompts (post-referral)

- “[Your brand] reviews”

- “What is [Your brand] known for?”

- “Who uses [Your brand]?”

Score each response against three criteria:

- Is the information accurate?

- Does it reflect your actual positioning?

- Would it reinforce or undermine a warm referral?

The gaps you find are your content brief. Not keyword gaps. Not topical gaps. Narrative gaps — places where what AI is saying about you doesn’t match what you want to be known for, or doesn’t match the level of credibility a buyer needs to move forward.
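The audit above can be organized as a simple worksheet: every prompt template, filled in with your own details, crossed with every platform, plus three blank scoring fields for a human reviewer. The following is one possible sketch. The brand, competitor, and category values are placeholders, and `build_audit_sheet` and `narrative_gaps` are hypothetical helper names, not part of any tool mentioned here.

```python
from typing import Dict, List

# Templates adapted from the audit lists above; {placeholders} get filled per brand
TEMPLATES: Dict[str, List[str]] = {
    "discovery": [
        "{category} for {audience}",
        "Best {service} companies",
        "How to {problem}",
    ],
    "comparison": [
        "{brand} vs. {competitor_a} vs. {competitor_b}",
        "My colleague recommended {brand}, what do I need to know?",
        "Is {brand} good for {use_case}?",
    ],
    "validation": [
        "{brand} reviews",
        "What is {brand} known for?",
        "Who uses {brand}?",
    ],
}
PLATFORMS = ["ChatGPT", "Perplexity", "Google AI Mode"]
CRITERIA = ["accurate", "matches_positioning", "reinforces_referral"]


def build_audit_sheet(values: Dict[str, str]) -> List[dict]:
    """One row per (stage, prompt, platform); scores start as None (unreviewed)."""
    rows = []
    for stage, templates in TEMPLATES.items():
        for template in templates:
            for platform in PLATFORMS:
                row = {"stage": stage, "platform": platform,
                       "prompt": template.format(**values)}
                row.update({criterion: None for criterion in CRITERIA})
                rows.append(row)
    return rows


def narrative_gaps(rows: List[dict]) -> List[dict]:
    """Rows where a reviewer marked at least one criterion as False."""
    return [row for row in rows if any(row[c] is False for c in CRITERIA)]


# Placeholder values for an imaginary company
values = {
    "category": "marketing analytics", "audience": "B2B SaaS teams",
    "service": "marketing analytics", "problem": "measure AI search visibility",
    "brand": "Acme Analytics", "competitor_a": "Competitor A",
    "competitor_b": "Competitor B", "use_case": "small in-house teams",
}
sheet = build_audit_sheet(values)
print(len(sheet), "rows to review")
```

The scoring itself stays manual in this sketch. A person reads each response and sets the three fields to `True` or `False`, and `narrative_gaps` then returns the rows that feed the content brief.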

AI Citation Strategy Benchmark Table

| Strategy Type | Effort Level | Citation Impact | Time to Results | Risk Level | Long-Term Value |
|---|---|---|---|---|---|
| LinkedIn Posting Only | Low | Low | Medium | Low | Low |
| High-Volume AI Content | Low | Medium (short-term) | Fast | High | Very Low |
| Original Authority Content | Medium | Medium–High | Medium | Low | High |
| Authority Content + Distribution | High | Very High | Medium | Low | Very High |
| Full Strategy (Content + Distribution + Prompt Tracking) | High | Maximum | Medium–Long | Low | Maximum |

Web Data vs. Training Data: A Gap Worth Tracking

Seer built a tool to compare how a brand appears in AI responses when web search is enabled versus when AI is drawing purely from training data. This distinction matters because:

- Training data reflects what AI learned about your brand during model training — accumulated over time from all available public sources

- Live web data reflects what AI can find right now when given access to search

If you perform significantly better when web search is enabled, that means your recent content and earned placements are working — but they haven’t yet influenced the model’s underlying knowledge of your brand. Your GEO strategy should include both: building current web presence that AI can retrieve today, and building the kind of durable, widely-distributed brand record that shapes training data over time.

If you perform better from training data than from live web, that’s a different signal — your historical brand equity is strong but your recent content isn’t reinforcing it. Time to close that gap.
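If you score the same prompt set twice, once with web search enabled and once without, the two readings described above reduce to a comparison of two rates. This is a small sketch of that comparison, not a reconstruction of Seer's tool. The function name, the labels, and the 0.05 tolerance are arbitrary assumptions you would tune to your own sample size.

```python
def classify_gap(web_rate: float, training_rate: float, tolerance: float = 0.05) -> str:
    """Compare the share of favorable responses with and without web search.

    web_rate:      share of prompts answered well when web search is enabled
    training_rate: share answered well from training data alone
    tolerance:     differences at or below this size are treated as no gap (assumed)
    """
    difference = web_rate - training_rate
    if difference > tolerance:
        # Recent content and placements are retrievable, but not yet "known" to the model
        return "web_ahead"
    if difference < -tolerance:
        # Historical brand equity is strong; recent content is not reinforcing it
        return "training_ahead"
    return "no_meaningful_gap"


# Hypothetical audit results for one brand
print(classify_gap(web_rate=0.60, training_rate=0.30))  # web_ahead
print(classify_gap(web_rate=0.20, training_rate=0.50))  # training_ahead
```

With small prompt sets, a few responses can swing either rate, so treat the label as a prompt for closer reading rather than a verdict.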

Putting the Three Moves Together

Here’s how these three moves compound on each other in practice:

A team doing Move 1 alone publishes quality original content on LinkedIn consistently. They earn some citations. They’re building credibility. But their citation surface area is capped by LinkedIn’s single-domain authority, and they have no visibility into how their brand is performing in the comparison prompts that precede purchases.

A team doing Moves 1 and 2 creates that same quality content and distributes it through earned media placements. Their citation rate is now potentially 4x what it would be from LinkedIn alone. AI encounters their content in more trusted contexts and surfaces it more frequently.

A team doing all three moves earns citations, distributes them across multiple authoritative domains, and tracks the branded prompts where buying decisions are actually being made. They know not just whether they’re being cited — but whether those citations are converting to trust, and whether their narrative in AI matches the brand they’re trying to build.

That third team isn’t just optimizing for AI visibility. They’re building a brand that compounds — one that earns word-of-mouth referrals, shows up accurately when AI is consulted, and reinforces the recommendation rather than undermining it.


A Note on the Long Game

There’s real tension in this space right now between short-term tactics that generate visible metrics quickly and long-term strategies that build something durable.

The short-term tactics aren’t without merit. Volume-based content can earn citations. Keyword-dense articles can generate AI impressions. If your goal is a screenshot for next quarter’s report, these approaches work.

But every piece of generic, algorithmically-optimized content you publish is training AI’s description of your brand. Every shortcut you take in content quality is a data point in the model’s understanding of what you stand for. And every citation earned by content that doesn’t actually represent your best work is a citation that might get you seen without getting you believed.

The teams that will win in AI search over the next three years aren’t the ones who move fastest. They’re the ones who build the most credible, widely-distributed, narratively-consistent body of work. The ones who treat citation lift not as a traffic hack but as the natural result of being the most authoritative source on the things they actually know best.

Earn the citation. Distribute the content. Track what buyers actually search. The playbook isn’t complicated. It’s just harder than it looks.

This is Part 2 in thinkdmg.com’s series on LinkedIn, AI search, and the future of brand visibility. Read the full foundation in Part 1: LinkedIn and AI Search in 2026 — The Complete Playbook.

AI Search & LinkedIn Strategy Series

- Part 1: LinkedIn and AI Search in 2026 — The Complete Playbook for Visibility, Trust, and Getting Chosen

- Part 2: The LinkedIn AI Citation Playbook Nobody’s Talking About — How to Earn It Instead of Game It

- Part 3: Stop Optimizing for AI. Start Optimizing for the Person Who Will Prompt AI About You

- Part 4: LinkedIn Gets You Seen. Here’s What Actually Gets You Chosen

Sources: Semrush LinkedIn AI Visibility Study (March 2026), Stacker/Scrunch Citation Lift Study (December 2025), Seer Interactive GEO Research (March 2026), Gartner B2B Buying Research.
